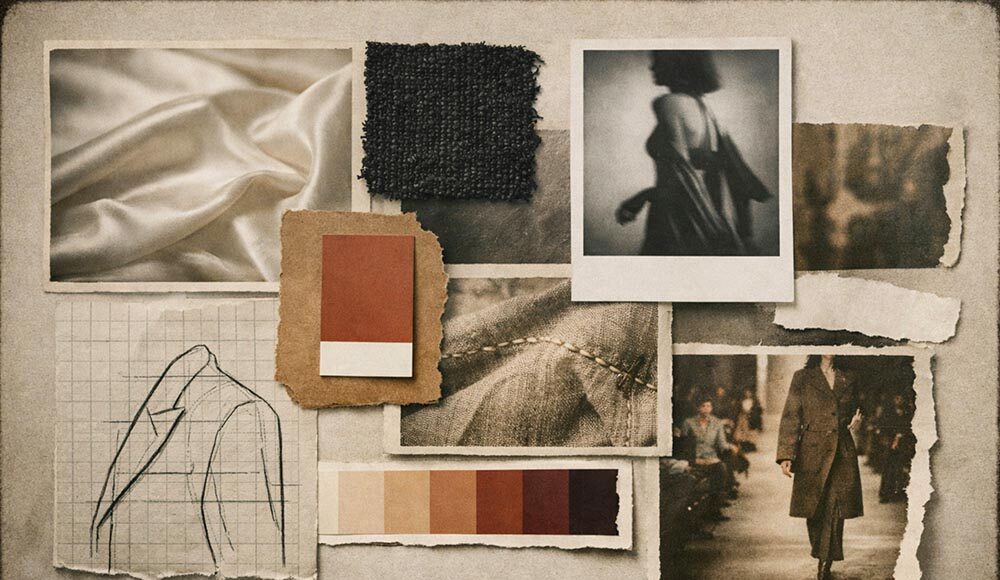

Every line begins in much the same place: a table, a cutting from an old magazine, a swatch of fabric, a scribbled sketch, a grainy reference photograph, a color or two that the designer cannot name but should. The moodboard has been fashion’s first gesture for as long as fashion has had one.

The ritual is the same in 2026; it is the materials that have changed. Open Instagram on any Sunday and you will see stylists, emerging designers, and creative directors posting images that look, at a glance, like unusually well-considered runway stills or campaign pictures.

Look closer and you see that they were generated: not found, not photographed, not dug out of an archive. They are built to spec, in minutes, to solve a problem that previously had no solution. Fashion ideation has quietly moved here, without the press-release pomp that accompanied the first wave of AI-generated art.

A recent Business of Fashion and McKinsey report found that more than 35 percent of fashion leaders already use generative AI for image creation, copywriting, and product discovery. A separate 2026 case study followed the rollout of a generative toolkit across the ASOS design team, which reportedly cut the time spent on early exploration by 75 to 80 percent. Neither figure is surprising if you have been in a studio lately. The more interesting question is what this means for the craft on the other side of the screen.

What is driving the shift toward generative fashion ideation?

The short answer is rhythm. The fashion calendar has compressed to a degree that was unimaginable a decade ago. Social media demands an endless stream of visual proof that a brand is alive, resort and pre-fall collections have turned four seasons into eight, and the half-life of any given trend is now measured in TikTok cycles rather than Vogue issues. Under that pressure, traditional ideation (Pinterest boards, contact sheets from last season’s shoots) has begun to feel a little geologic. It still works. It can still produce beautiful results.

But it was built for a world that had time. Generative technologies address a particular gap in that compressed calendar. They do not replace taste or research. They let a designer see an approximation of a half-formed idea before she is sure it is worth pursuing, so she can abandon it sooner or commit to it harder. That loop matters to a creative director who is juggling three campaigns at once.

The most obvious entry point to this workflow, and one that does not require committing to a specific software stack, is a professional AI art and design platform. Such a platform handles text-to-image generation, sketch rendering, and targeted image editing in a single web interface, so a designer can draft a silhouette in the morning, render three colorways by lunch, and still send something to the studio before the end of the day. It is not a substitute for the archive. It is a draft-speed tool for a part of the process that was always slower than it needed to be.

What are the hidden limitations of traditional reference gathering?

The problem with reference gathering is this: you can only find what already exists. Pinterest, Tumblr, vintage shops, old magazines, personal scrapbooks: all brilliant, all limited. When you are creating something truly new, the references you need do not exist yet. You search for “cyberpunk silk eveningwear with tailored shoulders and a liquid drape” and what you find is a dozen pictures of vaguely futuristic jackets and one that would be nearly right if it had not been shot in the wrong light.

The historical workaround has been to compromise: bundle three near misses onto a board and hope that your sketch artist can triangulate your intent. It works, most of the time. Something is also lost in translation, every time. This is where generative tools have crept in. You do not go searching for “cyberpunk silk eveningwear with a tailored shoulder”; you type the description and see what comes back.

The first attempt is almost never right, and anyone who claims otherwise has not actually used these tools. A first prompt such as “silk evening gown, cyberpunk” will most likely return something that resembles a Halloween costume made of plastic. What starts to work is specificity, added in layers: the fabric is described by weight and finish (liquid silk charmeuse, mid-weight, matte sheen), the silhouette is placed in a historical context (bias-cut after Madeleine Vionnet), and the photographic intent is named outright (shot on 35mm film, volumetric side lighting, editorial not commercial).

By the third or fourth round, you should have something that faithfully reflects what you had in mind, and that you can show your team with far less of the “yes, but imagine it more like this” hand-waving that usually eats up the first production meeting.

The part that no one writes about, incidentally, is that iteration loop. In the finished lookbook the output looks inevitable. The path to it seldom is.
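For readers who keep their prompts in a script or notebook, the layering described above can be sketched roughly as follows. This is a minimal illustration, not any platform’s API: the `PromptLayers` class and the `layered_drafts` helper are hypothetical names invented here, and the only real content is the descriptors already quoted in this article. The sketch simply builds one prompt per round, each adding a layer, so the iterations can be compared side by side.

```python
from dataclasses import dataclass, field


def _join(parts):
    """Join non-empty descriptors into one comma-separated prompt string."""
    return ", ".join(p.strip() for p in parts if p.strip())


@dataclass
class PromptLayers:
    """Hypothetical container for the layers of a fashion image prompt."""
    subject: str                                      # the rough first idea
    fabric: list = field(default_factory=list)        # weight and finish
    silhouette: list = field(default_factory=list)    # cut and historical context
    photography: list = field(default_factory=list)   # photographic intent

    def render(self) -> str:
        """Return the fully layered prompt."""
        return _join([self.subject, *self.fabric, *self.silhouette, *self.photography])


def layered_drafts(layers: PromptLayers) -> list:
    """Return one prompt per round, each adding the next non-empty layer."""
    accumulated = [layers.subject]
    drafts = [_join(accumulated)]
    for group in (layers.fabric, layers.silhouette, layers.photography):
        if group:
            accumulated = accumulated + list(group)
            drafts.append(_join(accumulated))
    return drafts


# Descriptors taken from the example in the text above.
layers = PromptLayers(
    subject="silk evening gown, cyberpunk",
    fabric=["liquid silk charmeuse", "mid-weight", "matte sheen"],
    silhouette=["bias-cut after Madeleine Vionnet"],
    photography=["shot on 35mm film", "volumetric side lighting",
                 "editorial not commercial"],
)

for round_number, prompt in enumerate(layered_drafts(layers), start=1):
    print(f"Round {round_number}: {prompt}")
```

Each printed line can be pasted into whatever tool you use. Keeping the rounds separate makes it easier to see which layer moved the image in the right direction.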

How does an AI art and design platform accelerate concept development?

This is where the category has actually come of age. The first wave of AI image tools, around 2022, delivered impressive one-off results but lacked consistency, specificity, and any grasp of how fashion works in the real world.

The 2026 generation is different. Current systems let a designer supply reference material (a photograph of cloth, a pose reference, a color palette) alongside a prompt and produce results that honor those inputs. Style consistency, the ability to lock a visual identity and reuse it across a dozen different applications, has become table stakes.

In-painting, which lets you modify a single element of an image without regenerating the whole thing, is table stakes too. It is the feature that turns these tools from toys into production tools. In practice it looks like this: a creative director sketches a rough runway look.

Ten minutes later she has twenty rendered variations across three fabric options and two stylings. She picks the two worth showing, sends them to the atelier with color notes, and starts on a different idea. The traditional version of that exploration would have taken a day on Pinterest, a morning with sketch artists, and revisions still arriving on Friday.

The point is not that AI does this better than a human team. The point is that it delivers on the first pass what used to arrive on the fourth. The finishing work is still done by the human team, and that is where the real talent lies.

Real-world applications: who is actually using AI moodboards?

Adoption is uneven across the industry, and some groups have moved faster than others.

Stylists and art directors

Stylists were among the first to embrace the practice, in part because their work is all about communication: telling a photographer, a set designer, and a client exactly what a shoot will feel like before anything has been built. AI-generated reference frames let a stylist settle the direction of lighting, mood, and wardrobe in the production meeting. Fewer misinterpretations on set mean fewer reshoots, and fewer reshoots mean budget saved for the things that really need it.

Emerging brand founders

For designers who are not backed by a heritage house, AI moodboards have become something of a competitive leveler. This has been especially visible in the more aesthetically niche corners of the market (Y2K wasteland, utilitarian deconstruction, post-internet maximalism, soft-brutalist menswear), where the reference pool is still small and the visual vocabulary has not yet been catalogued. A first-season founder pitching to investors or trade buyers used to need either a forgiving audience or a professional photographer. Neither comes cheap. An AI-generated lookbook, carefully branded and handled with taste, now lets a founder convey a vision at close to industry production value before the final clothes are even produced. Nor is the precedent hypothetical: Casablanca’s Spring/Summer 2023 campaign, produced in partnership with an AI artist, opened the door publicly, and smaller brands have been walking through it ever since.

Fashion students and educators

Schools as prominent as Parsons and Central Saint Martins have begun to fold generative tools into their curricula, not to replace drawing and pattern-cutting but to supplement ideation. For students whose hand-drawing skills lag behind their ambitions, AI removes a bottleneck that once silenced good ideas. In recent industry coverage, Parsons assistant professor Jeongki Lim put it bluntly: the next generation of designers sees AI the way their instructors once saw Photoshop, not as a ground-breaking invention but as an ordinary addition to the toolbox.

Established houses, quietly

The story that no one in marketing will quite confirm on the record: most of the big houses are already testing. In February 2026, ASOS announced the rollout of its toolkit. Mango has begun replacing some of its product detail page photography with AI-generated images. The houses that have not yet gone public are, by most accounts, working on it internally. Silence in this industry usually signals development, not a lack of interest.

What makes a generated moodboard actually useful for production?

There is a clear distinction between a pretty moodboard and a working one. A production-ready AI moodboard depends on certain technical capabilities that set a professional platform apart from consumer-grade tools. To fit into a fashion studio’s workflow, the platform needs four key features: color fidelity, texture specificity, silhouette control, and seamless handoff.

- Color fidelity: A moodboard is unusable if the palette drifts from image to image. Look for software that lets you save a color reference and apply it across all your outputs. This is the most common failure point in early AI tools and the clearest sign of a mature one.

- Texture specificity: The difference between “silk” and “duchesse satin with a mid-weight hand” is the difference between a designer’s sketchbook and a designer’s tech pack. Tools that can recognize and preserve specific textile qualities from reference images are dramatically more useful than tools that produce generic fabric-like surfaces.

- Silhouette control: If you cannot specify that the garment is off-shoulder, folds at the hip, and is photographed in three-quarter view, the tool is creating mood, not direction. Contemporary tools let you manage pose and framing either with reference images or with explicit parameters.

- Seamless handoff: A moodboard that can only be viewed in a web browser is a problem. The right platforms export cleanly to the lookbook, tech pack, or campaign deck format your team actually works with, and let you save iterations, compare them, and edit them without losing track of the idea.

Nail those four and the generated moodboard earns its place in the workflow. Miss one and you will spend more time correcting the tool than you would doing the work by hand.

Frequently asked questions

- Are AI moodboards replacing designers?

AI moodboards do not replace designers; they shift the designer’s task from manually collecting references to creative selection, editing, and curation. The craft is not obsolete. It moves upstream, and the scarce resource becomes taste rather than technical production. According to industry data from Business of Fashion and McKinsey, more than 35 percent of fashion executives already use generative AI in creative work, and the designers who thrive in that setting treat AI as a fast junior partner rather than a threat.

- Can AI-generated moodboards be used for commercial campaigns?

AI-generated moodboards can be used in commercial campaigns when the platform grants commercial-use rights and the human creative contribution is substantial enough to support a claim of authorship. The legal landscape currently favors documented creative direction, original prompting, and substantial post-generation editing over purely machine-generated output. Casablanca’s Spring/Summer 2023 campaign and Mango’s product detail page rollout are two quite different precedents. Verify the platform’s licensing terms before committing, and document your creative direction, because IP standards for AI images are still in flux.

- Do I need to be technical to use an AI moodboard tool?

An AI moodboard tool is driven not by code or a command line but by natural-language prompts and drag-and-drop reference uploads. The real skill is one you already have: you know what a well-composed image looks like, how a mood should be described, and when an output is ready. Because that taste transfers directly, fashion people often pick up these tools faster than engineers do. Syntax can be learned quickly; taste cannot.

- How do AI moodboards compare to Pinterest for concept development?

AI moodboards and Pinterest boards may look similar, but they play very different roles in the ideation process. Pinterest is a retrieval tool: it surfaces imagery that already exists online. An AI moodboard is a generation tool: it creates imagery from a prompt. Most creative directors now combine the two, using Pinterest for broad trend scanning and archival research and AI to visualize a concept that has never been made and therefore cannot be found in any archive.

- What should I look for in a professional AI moodboard platform?

A professional AI moodboard platform should offer color consistency across variations, targeted control over fabric and texture, reliable silhouette direction, and a clean export route into your existing production pipeline. Free consumer-grade tools commonly offer one or two of these. Paid subscription platforms offer all four and deliver them far faster than manual work, which is what earns them a place in the studio.

The final cut: where human curation meets generative speed

The moodboard has never been the final document. It is a means for a designer to express taste before the object of that taste exists. The medium has moved from cut-and-paste to digital, and it will become more generative still. What has not changed is the judgment needed to make a good one.

A generative tool can produce a thousand variations of an idea in an afternoon. It does not tell you which of them is the collection. Choosing what goes on the board and what goes in the bin, what gets cut and what gets tossed, is the designer’s labor, and as production has become faster that labor has become not cheaper but more valuable. The scarce resource is still taste. Everything else is execution.

The designers and stylists who are ahead in 2026 treat AI as an extremely fast assistant with no opinions. They feed it references, push it hard, discard ninety percent of what it produces, and keep the ten percent that sharpens the idea they were already forming. That poses no threat to the craft. It is the craft, with the heavy lifting done by noon.